Project Description

From REuP Project

| Revision as of 13:28, 14 July 2010 Apiotro (Talk | contribs) (→General concept) ← Previous diff |

Current revision Apiotro (Talk | contribs) (→General concept) |

||

| Line 7: | Line 7: | ||

| Currently an active research is performed in the domain of Application-Specific-Instruction set-Processors (ASIPs), in which the pipeline structure can be customized and utilized in the program through custom instructions. An extension to this approach is the No-Instruction-Set-Computer (NISC). The NISC compiler maps the application directly to the datapath [2]. It can achieve better parallelism and resource utilization than conventional instruction-set based compilers. Using this approach up to 70% speedup compared to an instruction-set based compiler has been achieved. The pipeline structure remains constant throughout the entire execution of the program. | Currently an active research is performed in the domain of Application-Specific-Instruction set-Processors (ASIPs), in which the pipeline structure can be customized and utilized in the program through custom instructions. An extension to this approach is the No-Instruction-Set-Computer (NISC). The NISC compiler maps the application directly to the datapath [2]. It can achieve better parallelism and resource utilization than conventional instruction-set based compilers. Using this approach up to 70% speedup compared to an instruction-set based compiler has been achieved. The pipeline structure remains constant throughout the entire execution of the program. | ||

| - | In this paper a completely novel solution is proposed. It exploits the parallelism of data processing and resources reuse of dynamically reconfigurable FPGAs, without any impact on development of new applications as well as migration (porting) of the existing ones. The proposed solution is an FPGA-based implementation of general-purpose processor, in which there are no sequentially executed instructions but all tasks are implemented in the reconfigurable hardware, in the completely automatic way. The whole array of configurable blocks can be modified at runtime, except for small part responsible for reconfiguration management. | + | In this project a completely novel solution is proposed. It exploits the parallelism of data processing and resources reuse of dynamically reconfigurable FPGAs, without any impact on development of new applications as well as migration (porting) of the existing ones. The proposed solution is an FPGA-based implementation of general-purpose processor, in which there are no sequentially executed instructions but all tasks are implemented in the reconfigurable hardware, in the completely automatic way. The whole array of configurable blocks can be modified at runtime, except for small part responsible for reconfiguration management. |

| The next chapter presents a main idea of fully reconfigurable general-purpose processor. Then some technical issues such as partitioning, software to hardware conversion and reconfiguration are discussed in more detail. Before the conclusions, some considerations dealing with adaptation of the operating system to the proposed processor architecture are included. | The next chapter presents a main idea of fully reconfigurable general-purpose processor. Then some technical issues such as partitioning, software to hardware conversion and reconfiguration are discussed in more detail. Before the conclusions, some considerations dealing with adaptation of the operating system to the proposed processor architecture are included. | ||

| + | |||

| ==General concept== | ==General concept== | ||

| - | Bearing in mind that the processor proposed in this paper is intended to be a general-purpose one, it should be able to execute any user application. Since this processor is a kind of fully reconfigurable FPGA, without any typical hard- nor even soft- processor core, the application to be executed should be stored in a memory as a set of bitstreams, which can implement its required functionality when loaded into FPGA in a correct sequence. The same must apply to the operating system, shared libraries, interrupt handlers, drivers, etc. Each bitstream must be loaded into FPGA on demand, when operations corresponding to this bitstream should be performed now or in the near future. This issue is explained in more detail in the next chapter. For general considerations it is enough to assume, that when the operations being performed by a given part of FPGA are finished, this part can be configured with a different bitstream, serving a new functionality. This means that the FPGA should be partially reconfigurable and should support very efficient reconfiguration techniques, which is discussed later. | + | Bearing in mind that the processor proposed in this project is intended to be a general-purpose one, it should be able to execute any user application. Since this processor is a kind of fully reconfigurable FPGA, without any typical hard- nor even soft- processor core, the application to be executed should be stored in a memory as a set of bitstreams, which can implement its required functionality when loaded into FPGA in a correct sequence. The same must apply to the operating system, shared libraries, interrupt handlers, drivers, etc. Each bitstream must be loaded into FPGA on demand, when operations corresponding to this bitstream should be performed now or in the near future. This issue is explained in more detail in the next chapter. For general considerations it is enough to assume, that when the operations being performed by a given part of FPGA are finished, this part can be configured with a different bitstream, serving a new functionality. This means that the FPGA should be partially reconfigurable and should support very efficient reconfiguration techniques, which is discussed later. |

| Devoting a part of FPGA for each thread allows running many threads concurrently (Figure 1), without time-division technique. This is a first source of data processing acceleration obtained in the proposed architecture. Obviously, similar acceleration is obtained in multi-core, traditional processors, but this approach is much more flexible and scaleable. | Devoting a part of FPGA for each thread allows running many threads concurrently (Figure 1), without time-division technique. This is a first source of data processing acceleration obtained in the proposed architecture. Obviously, similar acceleration is obtained in multi-core, traditional processors, but this approach is much more flexible and scaleable. | ||

| Line 17: | Line 18: | ||

| [[Image:Conc_threads.jpg|center|thumb|500px|Figure 1. Concurrent thread executions]] | [[Image:Conc_threads.jpg|center|thumb|500px|Figure 1. Concurrent thread executions]] | ||

| + | |||

| + | ==Thread partitioning== | ||

| + | It is obvious, that the functionality of most threads is too complex to be implemented outright in the FPGA at the same time. Therefore it must be split into the sequence of smaller pieces – partitions. The partitions can be in turn composed of several sub-units – operations, optionally finished with a jump (Figure 2). | ||

| + | [[Image:Spliting.jpg|center|thumb|400px|Figure 2. Thread splitting]] | ||

| + | The exact definition of the operation constitutes still an open issue and must be elaborated by means of some experiments. Currently, it seems that the operation can correspond to a basic block – a part of a program without any jumps out neither in, but probably, some basic blocks should be merged in one operation (e.g. for better loops optimization). To some extent the number of operations in the partition can be adjusted in order to obtain a required partition size. | ||

| + | |||

| + | The implementation of the partitions in FPGA should fulfill the following condition: before a given partition is finished, subsequent partition should be implemented (Figure 3). If a given partition is finishing its execution with a jump to one of two subsequent partitions, both partitions should be speculatively prepared for further execution. The implementation of subsequent partitions should take place as late as possible (ALAP). | ||

| + | |||

| + | If the partition is not active any longer, there are two possible solutions. This partition can be marked as stale (as soon as possible – ASAP) in order to release the occupied resources or it can remain implemented for later reuse. The second solution reduces the reconfiguration data transfer but on the other hand it makes the reconfiguration control process and the inter-partition data transfer more complex. | ||

| + | |||

| + | [[Image:Partitions.jpg|center|thumb|400px|Figure 3. Implementation of the partitions]] | ||

| + | |||

| + | In order to obtain the satisfying flexibility, it should be possible to place the partitions in any part of the FPGA. It means that the current, commercially available reconfiguration techniques, provided e.g. by Xilinx, cannot be efficiently used for this purpose. These techniques require the dynamic partition to be placed in a predefined area, surrounded with special communication blocks (Bus Macros) providing a predefined IO interface. | ||

| + | |||

| + | Another constraint regarding the partitions is that each branching partition must inform the configuration control module about the chosen branch. This can be obtained by setting a dedicated flag implemented inside the partition and reading it by the configuration control unit. | ||

| + | ==Software to hardware conversion== | ||

| + | |||

| + | The crucial point of the proposed general-purpose reconfigurable processor is an automatic transformation of any software operation into the hardware partition. Assuming that the thread to be partitioned is specified by means of any high-level programming language, e.g. C++, it can be converted to a set of corresponding bitstreams in three different ways (Figure 4). The first two ways are based on C++ to HDL conversion. There are many projects dealing with similar issues [3][4], but since it is a very complex problem, the results of these projects are still limited to some fractions of C++ standard and therefore they are not suitable for general-purpose processor implementation. | ||

| + | [[Image:Software-hardware.jpg|center|thumb|450px|Figure 4. C++ thread to set of bitstreams conversion]] | ||

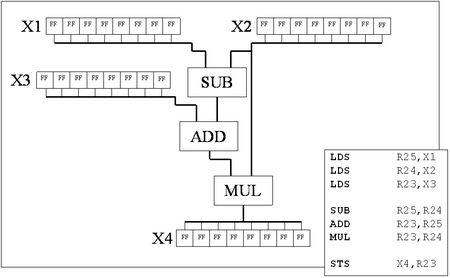

| + | The third approach, based on an intermediate code or an assembly language, seems to be much more efficient. First of all, it allows using advanced front-end tools, existing e.g. in a GNU Compiler, to reduce the complex, high-level programming structures to simple assembler instructions. Such a process, on the simple example, is depicted in Figure 5. | ||

| + | |||

| + | Each assembler instruction can be easily transformed into HDL description statements (Figure 6). The data dependencies as well as basic block boundaries can be extracted from the assembler code or from the compiler intermediate format (e.g. GIMPLE [5]). The compiler back-end tools can automatically generate an adequate HDL description (Figure 7). | ||

| + | |||

| + | [[Image:assembler.jpg|center|thumb|400px|Figure 5. Sample of assembler code generation]] | ||

| + | |||

| + | [[Image:asm-vhdl.jpg|center|thumb|400px|Figure 6. ASM to VHDL conversion]] | ||

| + | |||

| + | The synthesis process will generate an optimized hardware giving the functionality corresponding to the converted software operation. In this way, the results of many instructions requiring several clock cycles in the standard processor (data transfers, shifts), in the reconfigurable processor will be achieved by proper routing (Figure 8). Logic and arithmetic instructions will be performed by dedicated blocks. | ||

| + | |||

| + | [[Image:Vhdl-desc.jpg|center|thumb|450px|Figure 7. Adequate VHDL description]] | ||

| + | |||

| + | [[Image:hardware.jpg|center|thumb|450px|Figure 8. Generated hardware]] | ||

| + | |||

| + | Generating the operation-dedicated hardware constitutes a second source of data processing acceleration obtained in the proposed architecture. | ||

| + | |||

| + | The third source of this acceleration results from the processing parallelization. Independent logic or arithmetic instructions in the given software operation (horizontal parallelism [2]) will be implemented separately and executed concurrently, without any effort on the programmer's side. | ||

| + | |||

| + | During the hardware generating process the particular attention must be put on the length of combinational paths. Assuming a given clock synchronizing all dynamic partitions in the reconfigurable processor, the assembler to HDL converter can adapt the combinational paths to meet the clock period constraint. This can be achieved by inserting, if required, the intermediate register stages. Such structure not only allows meeting the timing requirements, but also allows implementing pipelined processing. This can be used to overlap operations from the subsequent iterations of the loop, taking the advantage of the vertical parallelism [2]. | ||

| + | |||

| + | It is also possible to leave the combinational paths as they are, setting a multi-cycle constraint on them. This allows using available time more efficiently, allowing individual operations to cross clock cycle boundaries. | ||

| + | |||

| + | The example of such optimization can be observed for the sample operation composed of the subtraction, addition and multiplication depicted in Figure 9. Assuming that the clock cycle period is 10 ns, addition and subtraction take 6 ns each, and multiplication takes 8 ns, the asynchronous execution of the presented operation requires 20 ns – two clock cycles. When not using the multi-cycle approach, this operation has to be divided into three pipeline stages, taking 30 ns. | ||

| + | |||

| + | [[Image:path-optim.jpg|center|thumb|450px|Figure 9. Long combinational paths optimization]] | ||

| + | |||

| + | ==Reconfiguration== | ||

| + | |||

| + | As mentioned above, the proposed processor cannot be efficiently implemented in commercially available reconfigurable devices. Dedicated ASIC is required. It should support high reconfiguration data transfer, with the possibility of free access to any part of the die. Reconfiguration restricted to just a row or column of existing resources, as it can be done in off-the-shelf FPGAs, increases the configuration data transfer and wastes the resources needlessly. | ||

| + | |||

| + | [[Image:dynamic.jpg|center|thumb|400px|Figure 10. Dynamic reconfiguration techniques: a) creeping partitions, b) multi-context reconfiguration]] | ||

| + | |||

| + | The dedicated ASIC should also efficiently support the external memory access, which appears to be a bottleneck in such parallel data processing, and the inter-partition data transfer. The memory access is considered in the next chapter. | ||

| + | |||

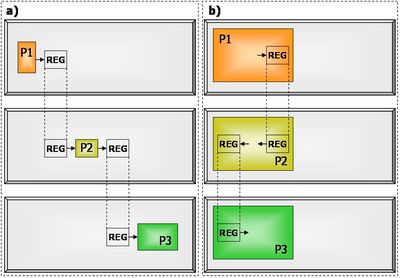

| + | Regarding the inter-partition data transfer, two scenarios are possible. The first one is based on creeping partitions (Figure 10a): each subsequent partition is implemented in the near neighborhood of the previous one. The output data of the previous partition, constituting the input data for the subsequent one, is stored in the registers implemented outside both partitions, but also in the near neighborhood of both of them in order to reduce the routing problems and the delays. | ||

| + | |||

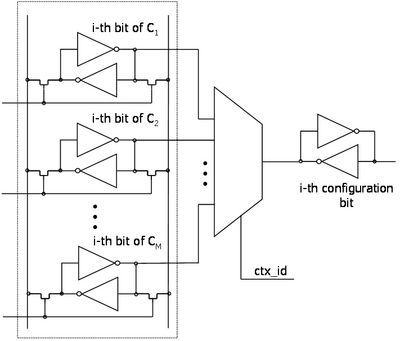

| + | The second solution is based on multi-context reconfiguration (Figure 11) [6]. In this approach each (i-th) configuration memory cell is connected – by means of a multiplexer – to M appropriate memory cells of the available contexts Cj. In these contexts M different configurations of resources can be stored. | ||

| + | |||

| + | [[Image:multi-context.jpg|center|thumb|400px|Figure 11. Multi-context reconfiguration]] | ||

| + | |||

| + | The context, which should be placed at a given moment in the configuration memory, referred to as the active context, is chosen by the context identifier signal denoted in Figure 11 by ctx_id. Because all required configuration bits could be replaced at the same time, in the parallel manner, the reconfiguration process is very fast and it does not depend on the size of the re-configured FPGA part. | ||

| + | |||

| + | Multi-context reconfiguration, due to its speed, allows using the same resources for two subsequent partitions. Furthermore, for non-branching partitions transferring the inter-partition data can be obtained by means of registers implemented inside the partitions (Figure 10b). The same registers, which are used to latch the output data of a given partition, can be used as a source of input data for the subsequent partition. In this way the resource utilization can be increased by exploiting unused registers inside the partition instead of allocating external ones. | ||

| + | |||

| + | Multi-context reconfiguration allows also optimizing the reconfiguration data transfer. Contexts for future reuse can remain in hidden memory cells, and new contexts can be prepared when the configuration data bus is not occupied. | ||

| + | |||

| + | ==Operating system== | ||

| + | New processor architecture would be incomplete without a dedicated operating system. Traditionally, an operating system is responsible for memory management, interrupts and hardware control, scheduling and inter-process communication (IPC). Many OSes have common programming interface and it is possible to run an application written on one OS on a different OS. The most known operating system interface is POSIX (Portable Operating System Interface) derived from Unix family. The operating system for the instructionless processor, called BitstreamOS, should also have this interface to make the application development independent from the underlying system architecture. | ||

| + | |||

| + | The BitstreamOS should be based on the standard OS concepts and it should provide: | ||

| + | * interrupt management | ||

| + | * memory management | ||

| + | * scheduling and placement | ||

| + | * inter-process communication and synchronization | ||

| + | |||

| + | Proposed platform will be very different from platforms based on the commonly used processors (RISC or CISC), thus the BitstreamOS should have a different architecture then other known OSes. The main difference is that BitstreamOS will not manage applications written for the sequential processor but will load the bitstreams corresponding to applications. This issue changes the way in which programs will be running and how the BitstreamOS will manage them. The BitstreamOS has to manage partition bitstreams like common OSes manage virtual memory. The scheduling is more complex than on the RISC or CISC processor. Therefore, the BitstreamOS has to provide more functionality in addition to the standard OS: | ||

| + | * loading and unloading partition bitstreams | ||

| + | * scheduling partitions to fully utilize the reprogrammable array | ||

| + | * arranging the communication channels between partitions if needed | ||

| + | * serializing memory accesses | ||

| + | |||

| + | One of the purposes of the Control Unit is to provide the memory management interface for BitstreamOS. This interface should consist of address and data bus to the external memory, to the cache memory for partition bitstreams and to the cache memory for data. BitstreamOS should be responsible for management and serialization of memory request accesses. | ||

| + | |||

| + | An interrupt controller should be also a part of Control Unit and it should provide a dedicated interface for the BitstreamOS to designate partitions as interrupt handlers (Interrupt Service Routine – ISR). An ISR may not be present in hardware all the time – loading it is yet another task for the operating system. | ||

| + | |||

| + | Scheduling, placement and routing will be the most complex tasks for BitstreamOS. These tasks should consider: | ||

| + | * priorities of partitions | ||

| + | * sizes of partitions | ||

| + | * dependencies among the partitions (IPC communication, synchronization) | ||

| + | * memory access requests of partitions | ||

| + | * locked partitions | ||

| + | |||

| + | It must be stressed that the placement is understood here as a selection of the appropriate location of the entire partitions in the fabric. The placement in the traditional sense is performed during the bitstream generation phase. Similarly, the routing connects the interfaces of the internally routed partitions. | ||

| + | |||

| + | ==Conclusions== | ||

| + | In this project an idea of the general-purpose processor implemented in the dynamically reconfigurable FPGA has been presented. The novelty of the proposed solution lays in the lack of typical sequential processing – all operations are realized in the hardware. Due to the proposed software to hardware conversion method, the introduced architecture, despite being significantly different than the native for standard processors, does not impose any modification of the software development process. In addition, the automatic data processing parallelization is built in the proposed conversion method and does not require any effort from the programmer side. | ||

| + | |||

| + | Generating the hardware optimized for any operation (set of instructions) places the proposed processor among no-instruction-set architectures and can come to fruition with the new quality, as far as the processing speed is concerned. Obviously, the efficient implementation of such processor requires specific integrated circuit to be designed, since the current off-the-shelf reconfigurable devices do not offer the demanded reconfiguration flexibility. | ||

| + | |||

| + | The proposed general-purpose processor architecture is in the basic concept phase and still many aspects of this idea must be carefully analyzed. Such issues as optimal partition size, an efficient reconfiguration method and the smallest block, which can be independently reconfigured must be experimentally verified. | ||

| + | |||

| + | ==References== | ||

| + | |||

| + | [1] H. K.-H. So, R. Brodersen, “A unified hardware/software runtime environment for FPGA-based reconfigurable computers using BORPH”, Transactions on Embedded Computing Systems, vol. 7(2), pp 1-28, February 2008. | ||

| + | |||

| + | [2] M. Reshadi, B. Gorjiara, D. Gajski, “Utilizing Horizontal and Vertical Parallelism with a No-Instruction-Set Compiler for Custom Datapaths”, Proceedings of the 2005 International Conference on Computer Design, pp. 69-76, San Jose, CA, USA, October 2005 | ||

| + | |||

| + | [3] D. Jain, “Object Oriented Programming Constructs' in VHSIC Hardware Description Language, Why & How”, Journal of Theoretical and Applied Information Technology, vol 3(1), pp. 30-36, 2005 | ||

| + | |||

| + | [4] A. Virginia, Y.D. Yankova, K Bertels, “An empirical comparison of ANSI-C to VHDL compilers: SPARK, ROCCC and DWARV”, Annual Workshop on Circuits, Systems and Signal Processing, ProRISC 2007, pp. 388 - 394, Utrecht, 2007 | ||

| + | |||

| + | [5] J. Merrill, "GENERIC and GIMPLE: A New Tree Representation for Entire Functions", Proceedings of the GCC Developers’ Summit 2003, pp. 171-179, Ottawa, Ontario, Canada, May 2003 | ||

| + | |||

| + | [6] J. Faura, J.M. Moreno, J. Madrenas, J.M. Insenser, “VHDL Modelling of Fast Dynamic Reconfiguration on Novel Multicontext RAM-based Field Programmable Devices”, Proceedings of the SIG-VHDL Spring'97 Working Conference, pp. 171-177, Toledo, Spain, April 1997 | ||

Current revision

Contents |

Instructionless processor architecture using dynamically reconfigurable logic

Abstract

In this project we would like to propose the idea of the general-purpose processor implemented in dynamically reconfigurable FPGA. The novelty of the presented solution lays in the lack of typical sequential processing – all operations are realized in parallel in the hardware. At the same time the new architecture does not impose any modification of the software development process.

Introduction

For many years the applications of FPGA devices have not been limited only to prototyping or to small volume production. Since the dynamic reconfiguration techniques of FPGAs are commercially available, there are different concepts of using them for acceleration of many types of computations. Initially, the FPGAs were used as coprocessors supporting conventional sequential processors in the most demanding calculations, where the parallel data processing inside the FPGA was very helpful. Since advanced FPGAs are equipped with processor cores, the sequential processing and parallel coprocessing can be implemented in a single die, significantly alleviating the interconnection problems between these two modules. In [1] a slightly modified UNIX OS running on PowerPC of Virtex allows programmers to treat the tasks implemented in the reconfigurable hardware in the same way as software tasks running on PowerPC. Nevertheless, developing a new or porting an existing application to use such a powerful architecture efficiently requires a quite low-level programming approach, which is far from current standards and trends.

Currently an active research is performed in the domain of Application-Specific-Instruction set-Processors (ASIPs), in which the pipeline structure can be customized and utilized in the program through custom instructions. An extension to this approach is the No-Instruction-Set-Computer (NISC). The NISC compiler maps the application directly to the datapath [2]. It can achieve better parallelism and resource utilization than conventional instruction-set based compilers. Using this approach up to 70% speedup compared to an instruction-set based compiler has been achieved. The pipeline structure remains constant throughout the entire execution of the program.

In this project a completely novel solution is proposed. It exploits the parallelism of data processing and resources reuse of dynamically reconfigurable FPGAs, without any impact on development of new applications as well as migration (porting) of the existing ones. The proposed solution is an FPGA-based implementation of general-purpose processor, in which there are no sequentially executed instructions but all tasks are implemented in the reconfigurable hardware, in the completely automatic way. The whole array of configurable blocks can be modified at runtime, except for small part responsible for reconfiguration management. The next chapter presents a main idea of fully reconfigurable general-purpose processor. Then some technical issues such as partitioning, software to hardware conversion and reconfiguration are discussed in more detail. Before the conclusions, some considerations dealing with adaptation of the operating system to the proposed processor architecture are included.

General concept

Bearing in mind that the processor proposed in this project is intended to be a general-purpose one, it should be able to execute any user application. Since this processor is a kind of fully reconfigurable FPGA, without any typical hard- nor even soft- processor core, the application to be executed should be stored in a memory as a set of bitstreams, which can implement its required functionality when loaded into FPGA in a correct sequence. The same must apply to the operating system, shared libraries, interrupt handlers, drivers, etc. Each bitstream must be loaded into FPGA on demand, when operations corresponding to this bitstream should be performed now or in the near future. This issue is explained in more detail in the next chapter. For general considerations it is enough to assume, that when the operations being performed by a given part of FPGA are finished, this part can be configured with a different bitstream, serving a new functionality. This means that the FPGA should be partially reconfigurable and should support very efficient reconfiguration techniques, which is discussed later.

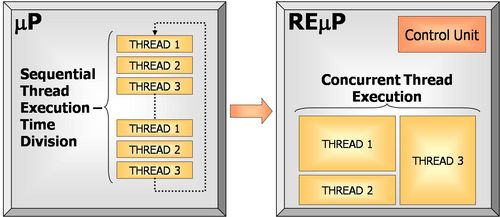

Devoting a part of FPGA for each thread allows running many threads concurrently (Figure 1), without time-division technique. This is a first source of data processing acceleration obtained in the proposed architecture. Obviously, similar acceleration is obtained in multi-core, traditional processors, but this approach is much more flexible and scaleable.

To manage the thread executions (in other words: to load the required bitstreams into the proper FPGA partitions) a special Control Unit is required. This block is the only static module in the FPGA – its configuration cannot be modified at runtime. It must be stressed, that this block does not need to perform any kind of scheduling. The required dependencies between bitstreams should be determined during the bitstream set generation process and stored, together with these bitstreams, in memory. The control block can only suspend a thread execution if there is no place for loading of the new bitstream.

Thread partitioning

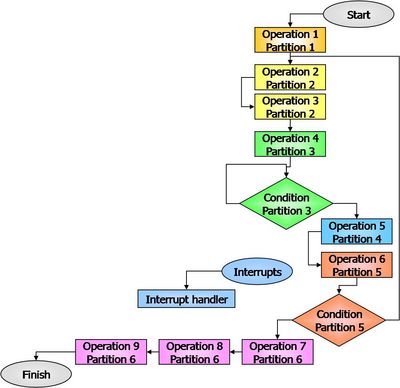

It is obvious, that the functionality of most threads is too complex to be implemented outright in the FPGA at the same time. Therefore it must be split into the sequence of smaller pieces – partitions. The partitions can be in turn composed of several sub-units – operations, optionally finished with a jump (Figure 2).

The exact definition of the operation constitutes still an open issue and must be elaborated by means of some experiments. Currently, it seems that the operation can correspond to a basic block – a part of a program without any jumps out neither in, but probably, some basic blocks should be merged in one operation (e.g. for better loops optimization). To some extent the number of operations in the partition can be adjusted in order to obtain a required partition size.

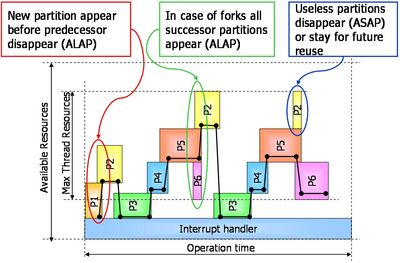

The implementation of the partitions in FPGA should fulfill the following condition: before a given partition is finished, subsequent partition should be implemented (Figure 3). If a given partition is finishing its execution with a jump to one of two subsequent partitions, both partitions should be speculatively prepared for further execution. The implementation of subsequent partitions should take place as late as possible (ALAP).

If the partition is not active any longer, there are two possible solutions. This partition can be marked as stale (as soon as possible – ASAP) in order to release the occupied resources or it can remain implemented for later reuse. The second solution reduces the reconfiguration data transfer but on the other hand it makes the reconfiguration control process and the inter-partition data transfer more complex.

In order to obtain the satisfying flexibility, it should be possible to place the partitions in any part of the FPGA. It means that the current, commercially available reconfiguration techniques, provided e.g. by Xilinx, cannot be efficiently used for this purpose. These techniques require the dynamic partition to be placed in a predefined area, surrounded with special communication blocks (Bus Macros) providing a predefined IO interface.

Another constraint regarding the partitions is that each branching partition must inform the configuration control module about the chosen branch. This can be obtained by setting a dedicated flag implemented inside the partition and reading it by the configuration control unit.

Software to hardware conversion

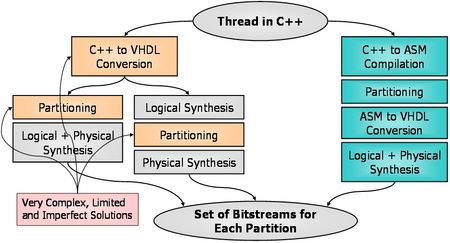

The crucial point of the proposed general-purpose reconfigurable processor is an automatic transformation of any software operation into the hardware partition. Assuming that the thread to be partitioned is specified by means of any high-level programming language, e.g. C++, it can be converted to a set of corresponding bitstreams in three different ways (Figure 4). The first two ways are based on C++ to HDL conversion. There are many projects dealing with similar issues [3][4], but since it is a very complex problem, the results of these projects are still limited to some fractions of C++ standard and therefore they are not suitable for general-purpose processor implementation.

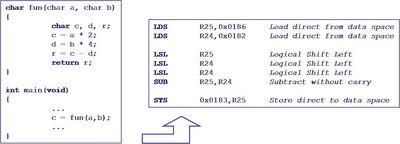

The third approach, based on an intermediate code or an assembly language, seems to be much more efficient. First of all, it allows using advanced front-end tools, existing e.g. in a GNU Compiler, to reduce the complex, high-level programming structures to simple assembler instructions. Such a process, on the simple example, is depicted in Figure 5.

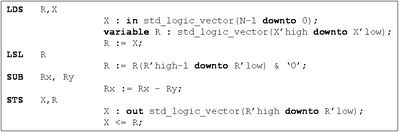

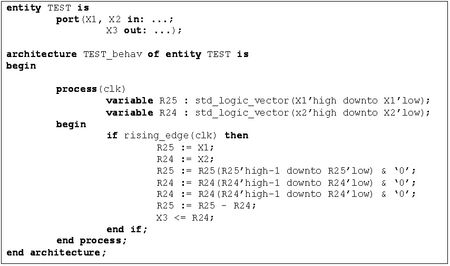

Each assembler instruction can be easily transformed into HDL description statements (Figure 6). The data dependencies as well as basic block boundaries can be extracted from the assembler code or from the compiler intermediate format (e.g. GIMPLE [5]). The compiler back-end tools can automatically generate an adequate HDL description (Figure 7).

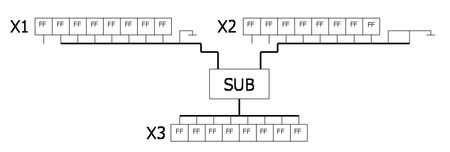

The synthesis process will generate an optimized hardware giving the functionality corresponding to the converted software operation. In this way, the results of many instructions requiring several clock cycles in the standard processor (data transfers, shifts), in the reconfigurable processor will be achieved by proper routing (Figure 8). Logic and arithmetic instructions will be performed by dedicated blocks.

Generating the operation-dedicated hardware constitutes a second source of data processing acceleration obtained in the proposed architecture.

The third source of this acceleration results from the processing parallelization. Independent logic or arithmetic instructions in the given software operation (horizontal parallelism [2]) will be implemented separately and executed concurrently, without any effort on the programmer's side.

During the hardware generating process the particular attention must be put on the length of combinational paths. Assuming a given clock synchronizing all dynamic partitions in the reconfigurable processor, the assembler to HDL converter can adapt the combinational paths to meet the clock period constraint. This can be achieved by inserting, if required, the intermediate register stages. Such structure not only allows meeting the timing requirements, but also allows implementing pipelined processing. This can be used to overlap operations from the subsequent iterations of the loop, taking the advantage of the vertical parallelism [2].

It is also possible to leave the combinational paths as they are, setting a multi-cycle constraint on them. This allows using available time more efficiently, allowing individual operations to cross clock cycle boundaries.

The example of such optimization can be observed for the sample operation composed of the subtraction, addition and multiplication depicted in Figure 9. Assuming that the clock cycle period is 10 ns, addition and subtraction take 6 ns each, and multiplication takes 8 ns, the asynchronous execution of the presented operation requires 20 ns – two clock cycles. When not using the multi-cycle approach, this operation has to be divided into three pipeline stages, taking 30 ns.

Reconfiguration

As mentioned above, the proposed processor cannot be efficiently implemented in commercially available reconfigurable devices. Dedicated ASIC is required. It should support high reconfiguration data transfer, with the possibility of free access to any part of the die. Reconfiguration restricted to just a row or column of existing resources, as it can be done in off-the-shelf FPGAs, increases the configuration data transfer and wastes the resources needlessly.

The dedicated ASIC should also efficiently support the external memory access, which appears to be a bottleneck in such parallel data processing, and the inter-partition data transfer. The memory access is considered in the next chapter.

Regarding the inter-partition data transfer, two scenarios are possible. The first one is based on creeping partitions (Figure 10a): each subsequent partition is implemented in the near neighborhood of the previous one. The output data of the previous partition, constituting the input data for the subsequent one, is stored in the registers implemented outside both partitions, but also in the near neighborhood of both of them in order to reduce the routing problems and the delays.

The second solution is based on multi-context reconfiguration (Figure 11) [6]. In this approach each (i-th) configuration memory cell is connected – by means of a multiplexer – to M appropriate memory cells of the available contexts Cj. In these contexts M different configurations of resources can be stored.

The context, which should be placed at a given moment in the configuration memory, referred to as the active context, is chosen by the context identifier signal denoted in Figure 11 by ctx_id. Because all required configuration bits could be replaced at the same time, in the parallel manner, the reconfiguration process is very fast and it does not depend on the size of the re-configured FPGA part.

Multi-context reconfiguration, due to its speed, allows using the same resources for two subsequent partitions. Furthermore, for non-branching partitions transferring the inter-partition data can be obtained by means of registers implemented inside the partitions (Figure 10b). The same registers, which are used to latch the output data of a given partition, can be used as a source of input data for the subsequent partition. In this way the resource utilization can be increased by exploiting unused registers inside the partition instead of allocating external ones.

Multi-context reconfiguration allows also optimizing the reconfiguration data transfer. Contexts for future reuse can remain in hidden memory cells, and new contexts can be prepared when the configuration data bus is not occupied.

Operating system

New processor architecture would be incomplete without a dedicated operating system. Traditionally, an operating system is responsible for memory management, interrupts and hardware control, scheduling and inter-process communication (IPC). Many OSes have common programming interface and it is possible to run an application written on one OS on a different OS. The most known operating system interface is POSIX (Portable Operating System Interface) derived from Unix family. The operating system for the instructionless processor, called BitstreamOS, should also have this interface to make the application development independent from the underlying system architecture.

The BitstreamOS should be based on the standard OS concepts and it should provide:

- interrupt management

- memory management

- scheduling and placement

- inter-process communication and synchronization

Proposed platform will be very different from platforms based on the commonly used processors (RISC or CISC), thus the BitstreamOS should have a different architecture then other known OSes. The main difference is that BitstreamOS will not manage applications written for the sequential processor but will load the bitstreams corresponding to applications. This issue changes the way in which programs will be running and how the BitstreamOS will manage them. The BitstreamOS has to manage partition bitstreams like common OSes manage virtual memory. The scheduling is more complex than on the RISC or CISC processor. Therefore, the BitstreamOS has to provide more functionality in addition to the standard OS:

- loading and unloading partition bitstreams

- scheduling partitions to fully utilize the reprogrammable array

- arranging the communication channels between partitions if needed

- serializing memory accesses

One of the purposes of the Control Unit is to provide the memory management interface for BitstreamOS. This interface should consist of address and data bus to the external memory, to the cache memory for partition bitstreams and to the cache memory for data. BitstreamOS should be responsible for management and serialization of memory request accesses.

An interrupt controller should be also a part of Control Unit and it should provide a dedicated interface for the BitstreamOS to designate partitions as interrupt handlers (Interrupt Service Routine – ISR). An ISR may not be present in hardware all the time – loading it is yet another task for the operating system.

Scheduling, placement and routing will be the most complex tasks for BitstreamOS. These tasks should consider:

- priorities of partitions

- sizes of partitions

- dependencies among the partitions (IPC communication, synchronization)

- memory access requests of partitions

- locked partitions

It must be stressed that the placement is understood here as a selection of the appropriate location of the entire partitions in the fabric. The placement in the traditional sense is performed during the bitstream generation phase. Similarly, the routing connects the interfaces of the internally routed partitions.

Conclusions

In this project an idea of the general-purpose processor implemented in the dynamically reconfigurable FPGA has been presented. The novelty of the proposed solution lays in the lack of typical sequential processing – all operations are realized in the hardware. Due to the proposed software to hardware conversion method, the introduced architecture, despite being significantly different than the native for standard processors, does not impose any modification of the software development process. In addition, the automatic data processing parallelization is built in the proposed conversion method and does not require any effort from the programmer side.

Generating the hardware optimized for any operation (set of instructions) places the proposed processor among no-instruction-set architectures and can come to fruition with the new quality, as far as the processing speed is concerned. Obviously, the efficient implementation of such processor requires specific integrated circuit to be designed, since the current off-the-shelf reconfigurable devices do not offer the demanded reconfiguration flexibility.

The proposed general-purpose processor architecture is in the basic concept phase and still many aspects of this idea must be carefully analyzed. Such issues as optimal partition size, an efficient reconfiguration method and the smallest block, which can be independently reconfigured must be experimentally verified.

References

[1] H. K.-H. So, R. Brodersen, “A unified hardware/software runtime environment for FPGA-based reconfigurable computers using BORPH”, Transactions on Embedded Computing Systems, vol. 7(2), pp 1-28, February 2008.

[2] M. Reshadi, B. Gorjiara, D. Gajski, “Utilizing Horizontal and Vertical Parallelism with a No-Instruction-Set Compiler for Custom Datapaths”, Proceedings of the 2005 International Conference on Computer Design, pp. 69-76, San Jose, CA, USA, October 2005

[3] D. Jain, “Object Oriented Programming Constructs' in VHSIC Hardware Description Language, Why & How”, Journal of Theoretical and Applied Information Technology, vol 3(1), pp. 30-36, 2005

[4] A. Virginia, Y.D. Yankova, K Bertels, “An empirical comparison of ANSI-C to VHDL compilers: SPARK, ROCCC and DWARV”, Annual Workshop on Circuits, Systems and Signal Processing, ProRISC 2007, pp. 388 - 394, Utrecht, 2007

[5] J. Merrill, "GENERIC and GIMPLE: A New Tree Representation for Entire Functions", Proceedings of the GCC Developers’ Summit 2003, pp. 171-179, Ottawa, Ontario, Canada, May 2003

[6] J. Faura, J.M. Moreno, J. Madrenas, J.M. Insenser, “VHDL Modelling of Fast Dynamic Reconfiguration on Novel Multicontext RAM-based Field Programmable Devices”, Proceedings of the SIG-VHDL Spring'97 Working Conference, pp. 171-177, Toledo, Spain, April 1997